In a 20,000-word essay titled The Adolescence of Technology, Dario Amodei, Chief Executive Officer of Anthropic, sets out a comprehensive reflection on where artificial intelligence stands today and where it may be headed. The length alone signals intent. This is not a product announcement or a policy memo. It is an attempt to frame the current moment in AI development as a transitional stage that resembles adolescence rather than maturity.

The central premise is straightforward. Advanced AI systems are no longer experimental curiosities, yet they are not fully reliable institutions either. They display capability alongside volatility. They can reason, code, summarize, and assist in research, yet they still hallucinate, misinterpret context, and produce uneven results. According to Amodei, this combination of strength and unpredictability defines the present stage of AI.

AI as an Adolescent Technology

Amodei argues that frontier AI models resemble adolescents in several respects. They exhibit expanding cognitive reach. They respond to a broad array of tasks. They learn rapidly. At the same time, they require guardrails, oversight, and structured environments. Without discipline and governance, their impact can be destabilizing.

This framing is useful because it moves the conversation away from two extremes that have dominated public discourse. On one side are forecasts of imminent superintelligence. On the other are dismissals that reduce AI to an overextended automation tool. The adolescence analogy accepts that the systems are consequential while acknowledging that they remain incomplete.

For executives, the more practical implication is this: we are operating in a window where capability is increasing faster than institutional readiness.

Capability Growth and Compressed Timelines

Amodei also outlines his belief that AI progress is accelerating along multiple dimensions at once. Model scale, reasoning depth, multimodal integration, and autonomy are advancing in parallel. He suggests that the path to highly capable systems could unfold more quickly than many policymakers and business leaders expect.

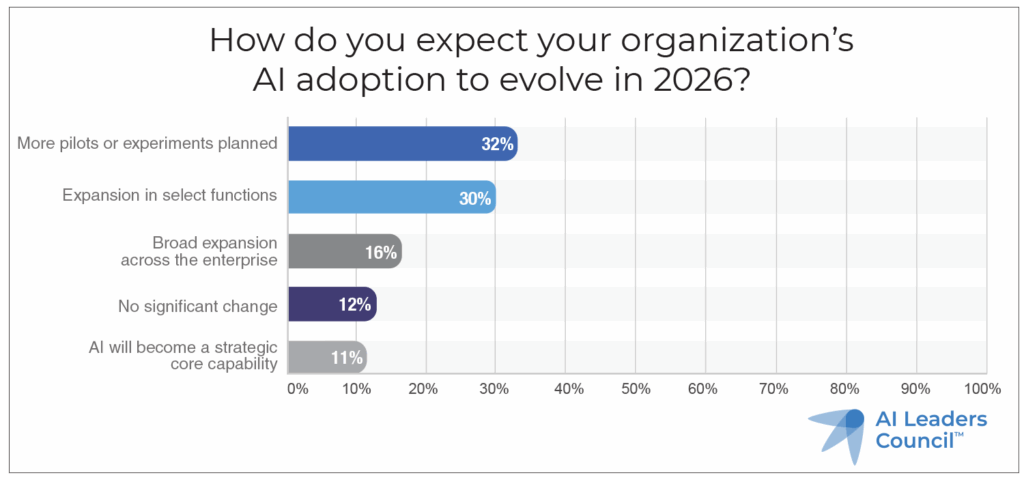

This perspective aligns with what we have observed in our own research. In the AI Leaders Council’s 2026 Corporate AI Outlook Study, a majority of corporate respondents indicated that AI initiatives are moving from pilot programs into operational workflows. Many organizations expect measurable productivity gains within the next twelve to eighteen months.

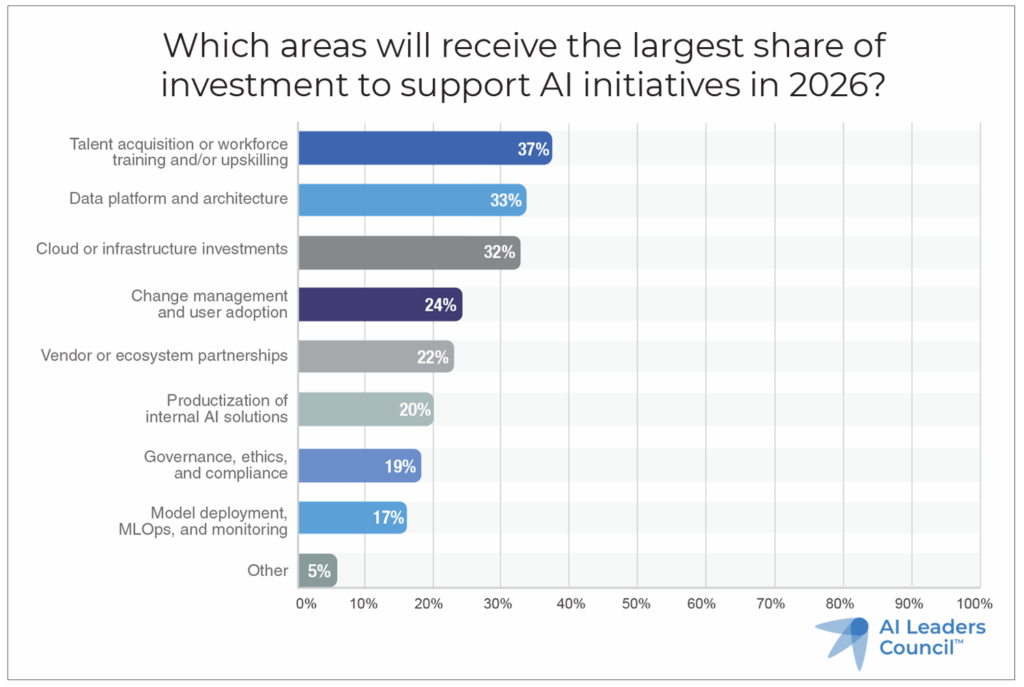

At the same time, the study highlights a structural gap. While enthusiasm for AI investment is rising, governance, data architecture, and workforce preparation lag behind. That imbalance reinforces Amodei’s broader point. The technology’s progression may be outpacing the maturity of the systems meant to manage it.

Risk, Safety, and Institutional Responsibility

A substantial portion of Amodei’s essay addresses safety. He contends that as models become more capable, the consequences of misuse or misalignment increase proportionally. He advocates for structured safety research, red teaming, and evaluation frameworks that evolve alongside model performance.

From a corporate vantage point, this conversation often feels abstract. However, the operational version of AI safety is not theoretical. It concerns data leakage, regulatory exposure, reputational damage, and model outputs that influence financial or clinical decisions.

Our research indicates that many organizations still lack formal AI risk frameworks. In the 2026 Corporate AI Outlook Study, a significant segment of respondents reported that AI governance remains either informal or under development. That finding should not be interpreted as negligence. Rather, it reflects the speed at which AI capabilities have entered mainstream business processes.

Amodei’s argument underscores the need for parallel development. Model intelligence and institutional controls must evolve together.

Economic Transformation and Workforce Adjustment

Another theme in the essay concerns economic change. Amodei acknowledges that advanced AI systems may alter labor markets, particularly in knowledge work. He stops short of deterministic predictions, yet he clearly anticipates material shifts in how tasks are performed.

This is consistent with what we are hearing from AI Leaders Council members. The conversation has shifted from “Should we adopt AI?” to “Which functions will be redesigned first?” Finance, legal research, software development, customer support, and compliance analysis are frequently cited as early transformation zones.

The relevant question for executive leadership is not whether roles will change. The question is how organizations will manage that transition. Upskilling, job redesign, and human oversight structures are no longer peripheral considerations. They are central to AI return on investment.

Strategic Implications for AI Leaders

Amodei’s essay is philosophical in tone, yet it carries operational implications. If AI is indeed in its adolescence, then leadership decisions during this period will shape long-term outcomes.

Several strategic considerations emerge:

- Invest in evaluation, not only deployment. Capability without measurement introduces risk.

- Build governance in parallel with experimentation. Retrofitting controls after scale is more difficult.

- Prioritize data quality. Advanced models amplify both structured insight and structured error.

- Prepare the workforce deliberately. Productivity gains depend on human adoption and fluency.

In our own community, the organizations reporting the most progress share a common trait. They treat AI as a cross-functional initiative rather than a narrow technology project. Legal, compliance, HR, and operations are involved early. That approach reflects institutional maturity rather than technological novelty.

A Transitional Moment

The broader significance of The Adolescence of Technology lies in its attempt to situate AI within a historical arc. Amodei is effectively arguing that we are neither at the beginning nor at the end. We are in a transitional stage marked by visible capability and incomplete stability.

For AI executives and board members, this framing is constructive. It discourages complacency without endorsing alarmism. It emphasizes stewardship.

From the vantage point of the AI Leaders Council, the central takeaway is pragmatic. Organizations that invest in disciplined experimentation, data governance, and executive-level accountability will be positioned to benefit from the next wave of model capability. Those that treat AI as a peripheral tool may find themselves reacting to developments rather than shaping them.

The adolescence phase will not last indefinitely. Institutional habits formed now will influence how the technology integrates into markets, regulatory systems, and corporate strategy. Thoughtful leadership during this period is likely to determine which organizations translate AI potential into measurable advantage.

For ongoing analysis, executive interviews, and research insights, visit https://aileaderscouncil.org/.