As part of our continued deep dive into the 2026 Corporate AI Outlook Study, we are examining how AI leaders are thinking about risk as adoption expands. While investment and usage continue to grow, concerns around governance, accountability, and execution are becoming more prominent in executive discussions.

In this post, we focus on the risks and concerns leaders anticipate most as AI initiatives scale in 2026, and what those concerns reveal about how organizations are approaching responsibility and control.

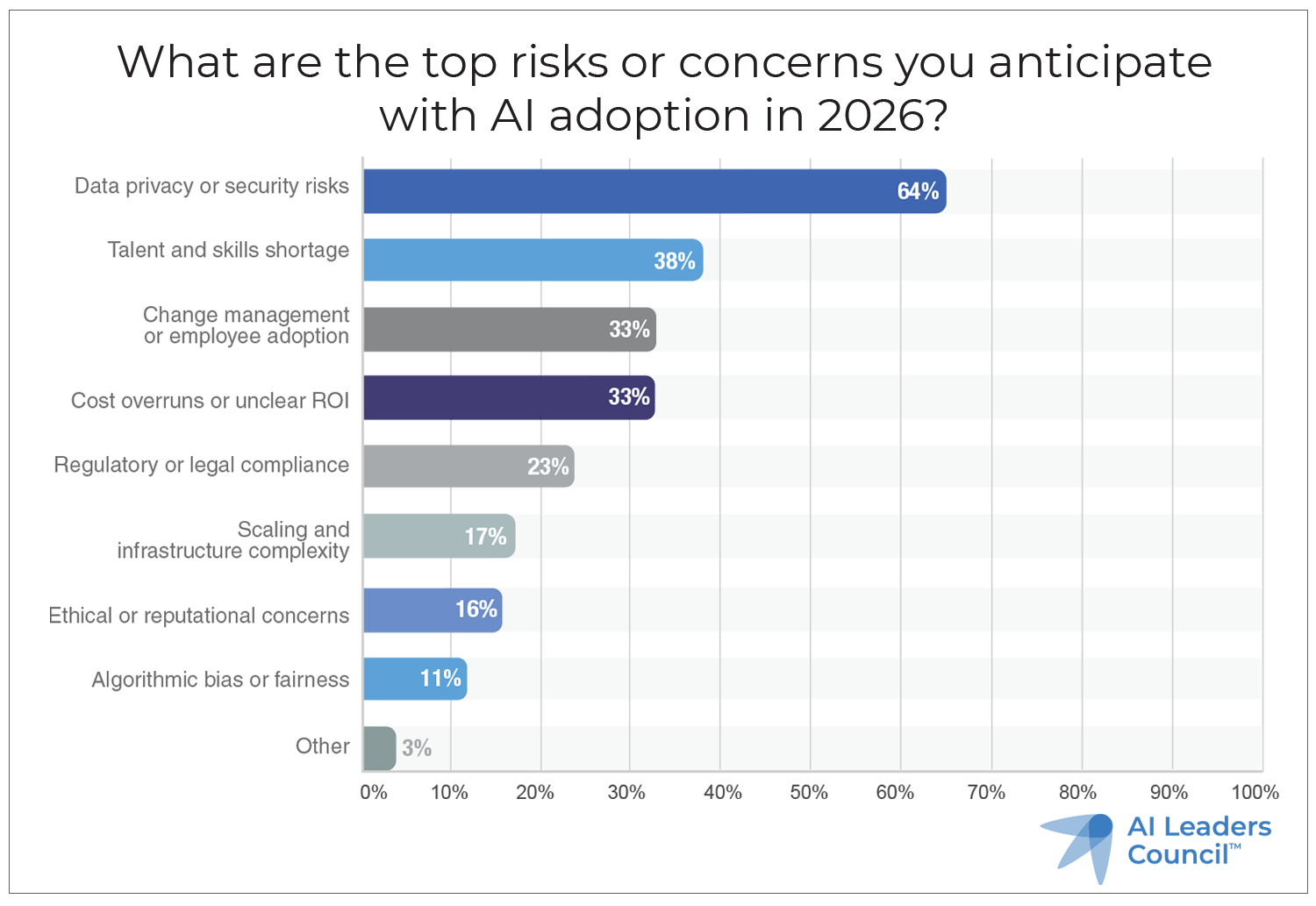

Data privacy and security lead AI risk concerns

Survey results show that data privacy and security risks remain the most frequently cited concern related to AI adoption, named by 64% of respondents. As AI systems become more deeply integrated into core workflows, they increasingly interact with sensitive data, customer information, and proprietary business processes.

This concern reflects a broader shift in how AI is viewed. What was once treated as a discrete technology initiative is now understood as an extension of the organization’s overall security and risk posture. AI leaders are being asked to apply the same rigor to AI systems that already exists for other critical platforms.

- Data privacy or security risks: 64%
- Talent and skills shortage: 38%
- Change management or employee adoption: 33%
- Cost overruns or unclear ROI: 33%
- Regulatory or legal compliance: 23%
- Scaling and infrastructure complexity: 17%
- Ethical or reputational concerns: 16%
- Algorithmic bias or fairness: 11%
- Other: 3%

People and AI adoption risks are also prominent

Beyond technical risk, leaders express strong concern about talent and skills shortages (38%) and about change management and employee adoption (33%). These risks often materialize quietly, through underutilized tools, inconsistent usage, or reliance on informal workarounds.

When employees lack confidence or clarity around AI usage, organizations face a dual risk. Value is left unrealized, and unsanctioned or inconsistent use can introduce governance and compliance exposure. Addressing these issues requires education, communication, and leadership engagement, not just policy.

AI ROI uncertainty reflects rising accountability

Cost overruns and unclear return on investment also rank high among anticipated risks, cited by 33% of respondents. As AI budgets increase, leaders face growing pressure to demonstrate tangible outcomes and justify continued investment.

This concern reflects a maturing view of AI. Leaders are no longer satisfied with proof-of-concept success alone. They are increasingly expected to connect AI initiatives to operational performance, financial impact, and strategic priorities.

AI governance must evolve alongside adoption

Regulatory and legal compliance (23%), infrastructure complexity (17%), and ethical or reputational considerations (16%) remain meaningful concerns. Together, these risks point to the need for governance frameworks that evolve as AI usage expands.

Organizations that delay governance until after deployment often struggle to regain control. Those that integrate risk management early, align AI initiatives with existing controls, and clarify accountability are better positioned to scale responsibly.

Preparing for responsible AI scale

The risks identified in the study do not suggest hesitation about AI. Rather, they signal a more sober and realistic assessment of what it takes to scale effectively.

The 2026 Corporate AI Outlook Study from the AI Leaders Council explores how leaders are balancing opportunity and risk as AI adoption accelerates. Download the full report to see how organizations are addressing security, adoption, and governance challenges heading into 2026.